AI in the Hiring Process: Benefits, Risks & Step-by-Step Implementation Guide (2026)

43% of organizations used AI for HR tasks in 2025, up from 26% in 2024 (SHRM). 64% of companies using HR AI apply it specifically to recruiting - making talent acquisition the primary entry point for enterprise AI adoption. The pitch is compelling: faster screening, better matching, lower cost-per-hire. The reality is more complicated.

AI in the hiring process delivers real efficiency gains, but it also introduces bias risks, legal obligations, and candidate trust problems that most implementation guides gloss over. This article covers how AI in hiring and recruiting actually works across the funnel, what the measurable benefits and risks look like, what compliance requirements apply in 2025, and a six-step framework for implementing it responsibly. Platforms like HackerEarth apply AI specifically to skills-based technical assessments - one of the highest-signal, lowest-risk applications covered here.

What Is AI in Hiring - and Why Does It Matter Now?

Defining AI in the Hiring Context

"AI in hiring" covers a wider spectrum than most vendors admit, and conflating the categories leads to buying the wrong tools. At one end is rule-based automation - fixed logic like auto-rejecting applications missing a required field. In the middle is machine learning, which improves from data patterns to score resumes or predict fit. At the far end is generative AI - large language models that draft job descriptions, generate outreach, or summarize interview notes. Most platforms market themselves as "AI-powered" while running rule-based logic; when evaluating any tool, ask which layer it operates at, what data trained it, and how it explains its outputs.

Key Market Drivers in 2025

Three pressures are making adoption urgent rather than optional. The first is speed: AI screening reduces time-to-shortlist by up to 40%, and automation adopters fill 64% more jobs per recruiter (Eightfold AI and Indeed/Bullhorn, 2024-2025). The second is cost: AI reduces cost-per-hire by up to 30% at scale (DemandSage, 2025). The third is candidate-side AI use: 65% of hiring managers have now caught candidates using AI deceptively in applications (High5Test, 2026) - making resume credentials even less reliable and skills-based assessment more necessary.

(Visual callout: "AI Hiring at a Glance" - 43% of orgs use AI for HR; 64% apply it to recruiting; 40% faster time-to-shortlist; 30% cost-per-hire reduction.)

How Is AI Used in the Hiring Process?

How is AI used in hiring in practice? AI in hiring and recruiting now touches every funnel stage:

- Job description optimization: NLP tools remove biased language and improve keyword targeting

- Candidate sourcing and outreach: AI searches databases and drafts personalized messages

- Resume screening and shortlisting: ML-based parsing ranks applicants against role criteria

- Skills assessments and coding tests: AI administers, grades, and proctors technical evaluations

- Interview scheduling and chatbots: Conversational AI handles calendar coordination and candidate Q&A

AI for Job Description Optimization

This is one of the lowest-risk, highest-ROI places to start - the tool never touches a candidate, just the text that attracts them. AI-generated job descriptions reduce time-to-publish by approximately 40% and decrease biased language by 25 to 50% (LinkedIn Talent Solutions, 2025), with measurable downstream impact on applicant diversity for technical roles.

AI for Candidate Sourcing and Outreach

AI sourcing cuts time on top-of-funnel prospecting by approximately 50% (Fetcher, 2024-2025) and AI-personalized outreach increases positive response rates by 5 to 12% (LinkedIn Talent Solutions, 2025). The limitation worth stating plainly: these tools surface candidates who look like your past hires, which reinforces existing team homogeneity unless you actively counterbalance it.

AI for Resume Screening and Shortlisting

This is simultaneously the most widely used and most legitimately criticized AI hiring application. 56% of companies use AI for screening (DemandSage), but keyword-matching logic rejects qualified candidates who describe skills differently - a senior engineer who writes "built distributed systems" may score below a candidate who simply copied the job description's phrasing verbatim. The communities calling it "keyword matching on steroids" are not entirely wrong about the weaker implementations.
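The failure mode described above can be sketched in a few lines. This is a deliberately naive illustration, not any vendor's actual scoring logic; the function name `keyword_score`, the required phrases, and both resume snippets are hypothetical.

```python
def keyword_score(resume_text: str, required_phrases: list[str]) -> float:
    """Return the fraction of required phrases found verbatim (case-insensitive)."""
    if not required_phrases:
        return 0.0
    text = resume_text.lower()
    hits = sum(1 for phrase in required_phrases if phrase.lower() in text)
    return hits / len(required_phrases)


# Hypothetical job criteria and resume snippets, for illustration only.
required = ["distributed systems architecture", "python"]
senior_engineer = "Built distributed systems handling 2M requests per second; services in Python."
phrase_copier = "Experience with distributed systems architecture and Python."

senior_score = keyword_score(senior_engineer, required)  # misses the exact phrase
copier_score = keyword_score(phrase_copier, required)    # matches both phrases verbatim
```

The engineer with the stronger evidence scores lower because the matcher compares strings, not meaning. Stronger implementations narrow this gap, but it is worth asking any vendor how their tool handles equivalent phrasing.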

AI for Skills-Based Assessments and Coding Tests

This is where AI produces its clearest signal in technical hiring, because it tests what candidates can actually do instead of predicting it from resume proxies. HackerEarth administers AI-proctored coding assessments across 40-plus programming languages and 1,000-plus skills, with automated scoring that removes both human inconsistency and keyword-matching limitations. A candidate either solves the problem or does not - that output is objective and defensible in a way that resume ranking scores simply are not.
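To make "solves the problem or does not" concrete, here is a minimal sketch of test-case-based scoring. It is an illustration under stated assumptions, not HackerEarth's grader: `grade_submission`, the sample task, and the test cases are all hypothetical, and a production system would also sandbox the code and enforce time and memory limits.

```python
from typing import Any, Callable


def grade_submission(solution: Callable[..., Any],
                     test_cases: list[tuple[tuple, Any]]) -> float:
    """Return the fraction of test cases the submitted function passes."""
    if not test_cases:
        return 0.0
    passed = 0
    for args, expected in test_cases:
        try:
            if solution(*args) == expected:
                passed += 1
        except Exception:
            # A crash on one input counts as a failed case, not a failed run.
            pass
    return passed / len(test_cases)


# Hypothetical task: return the largest value in a non-empty list.
cases = [(([3, 1, 2],), 3), (([-5, -2],), -2), (([7],), 7)]


def correct(values):
    return max(values)


def buggy(values):
    # Wrongly assumes every list contains a positive number.
    best = 0
    for v in values:
        if v > best:
            best = v
    return best


correct_score = grade_submission(correct, cases)  # passes all three cases
buggy_score = grade_submission(buggy, cases)      # fails the all-negative case
```

Every candidate is run against the same cases, so the score depends only on what the code does, which is what makes the result easier to defend than a resume ranking.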

See how HackerEarth's AI-powered coding assessments help you evaluate developer skills objectively - [Request a Free Demo]

AI for Interview Scheduling and Chatbots

Conversational AI reduces candidate response times from 7 days to under 24 hours (Paradox/Olivia, 2025), and 40% of firms used AI chatbots with candidates in 2024 (NYSSCPA). This is where the ATS black hole gets solved: automated communication ensures no application disappears without acknowledgment.

AI for Video Interview Analysis

AI sentiment and facial expression analysis in video interviews is technically possible and legally hazardous - several active discrimination lawsuits name these tools specifically. Treat this application as requiring legal review before deployment, not as a standard hiring workflow.

(Visual callout: Comparison table - "AI vs. Manual Processes Across the Hiring Funnel" covering time saved, accuracy, and risk level per stage.)

Benefits of AI in Hiring and Recruiting

Speed and Efficiency Gains

Automation adopters fill 64% more jobs and submit 33% more candidates per recruiter than non-adopters (Indeed/Bullhorn, 2024). The practical outcome is that hiring managers review fewer applications, but better ones.

Cost Reduction

Companies using AI in recruitment reduce cost-per-hire by up to 30% (DemandSage, 2025), driven by reduced agency dependency, lower job board spend, and fewer unqualified interviews consuming hiring manager time.

Improved Quality of Hire

Candidates selected through AI processes are 14% more likely to receive an offer than those selected by manual screening (Forbes/Carv). For technical roles, skills-based assessments produce the strongest quality signal because they evaluate demonstrated ability rather than claimed credentials.

Enhanced Candidate Experience

79% of candidates want transparency when AI is used in their evaluation (HireVue, 2024-2025). Faster responses and automated status updates improve satisfaction - but only when the use of AI is disclosed, and most candidates currently do not realize when AI has been part of their evaluation.

Scalability for High-Volume Hiring

Campus drives and hackathon-based recruiting that require evaluating thousands of candidates become operationally feasible with automated grading and proctoring. HackerEarth's hackathon platform sources and evaluates passive technical talent at scale, turning a months-long manual sourcing exercise into a structured, measurable pipeline event.

(Visual callout: Risk-benefit matrix - 2x2 grid showing benefit magnitude vs. implementation complexity for each AI use case.)

AI Bias in Hiring: Risks and Ethical Concerns

Bias is the section most AI vendor content buries - which is exactly why it belongs near the front of any honest implementation guide.

How AI Bias Enters the Hiring Pipeline

AI systems learn from historical data, so if your past hiring decisions favored certain backgrounds or demographic profiles, the AI replicates those preferences at scale. Amazon's internal resume screener - trained on a decade of male-dominated applications - learned to penalize references to women's colleges; Amazon abandoned it. A Stanford study from October 2025 found AI screening tools still rated older male candidates higher than female candidates with identical qualifications. The bias does not cut one direction; it reflects whatever patterns existed in the training data.

Transparency, Explainability, and Privacy

Black-box AI hiring tools cannot explain why a specific applicant ranked where they did - and humans reviewing AI recommendations accept them without challenge approximately 90% of the time (NYC compliance research). This is both a governance failure and a legal exposure: the EU AI Act and NYC Local Law 144 both require explainable outputs and audit trails. Separately, video interview tools, behavioral assessments, and keystroke monitoring collect biometric data subject to GDPR and CCPA - before deploying any tool capturing video or audio, document what is collected, how long it is retained, and how candidates are notified.

The Risk of Over-Automation

Practitioners in the r/humanresources community raise this point correctly: fully automated screening produces fully automated errors at scale. AI-assisted, human-decided is the only configuration that lets you catch the tool's mistakes before they compound into discriminatory patterns.

AI Hiring Laws and Compliance: What HR Teams Must Know in 2025

The legal landscape is specific, enforceable, and expanding faster than most HR teams realize.

NYC Local Law 144 (Automated Employment Decision Tools)

In effect since January 2023 and enforced since July 2023, NYC LL 144 requires annual bias audits by independent third-party auditors, public posting of audit results, and candidate notification at least 10 business days before an AEDT is used - for any role performed in New York City, including remote roles associated with an NYC location. Penalties reach $1,500 per day per violation. A December 2025 audit by the NY State Comptroller found enforcement weak due to self-reporting challenges, but that does not reduce employer legal exposure.
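The 10-business-day notice window is easy to miscount by hand. The sketch below shows one way to compute the earliest date a tool could be used after notice goes out. It assumes business days mean Monday through Friday and ignores public holidays; both are simplifications to confirm with counsel, and the function name `earliest_aedt_use` is hypothetical.

```python
from datetime import date, timedelta


def earliest_aedt_use(notice_sent: date, business_days: int = 10) -> date:
    """Return the date that falls `business_days` weekdays after the notice date.

    Assumption: weekends are skipped, public holidays are not.
    """
    current = notice_sent
    remaining = business_days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday through Friday
            remaining -= 1
    return current


# Notice sent on Monday, 3 March 2025.
first_allowed = earliest_aedt_use(date(2025, 3, 3))
```

For a notice sent on Monday, 3 March 2025, this returns Monday, 17 March 2025. Building the check into the scheduling workflow, and not leaving it to recruiters, keeps the notice period from being skipped under time pressure.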

EU AI Act - High-Risk Classification for Hiring AI

The EU AI Act classifies AI used in employment decisions as high-risk, triggering obligations for technical documentation, decision logging, human oversight by qualified individuals, and conformity assessments before deployment. Partial effect began February 2025; full effect is August 2026. It applies to any company using these tools to evaluate EU-based candidates, regardless of where the employer is headquartered.

EEOC Guidance and Federal Landscape

The EEOC's 2023 guidance confirmed that Title VII anti-discrimination law applies to AI hiring tools, and the federal court in Mobley v. Workday held in 2024 that an AI vendor can be treated as an "agent" of the employer, with the case advancing as a collective action in 2025 - raising the stakes for vendor due diligence. State-level laws are accelerating: the Illinois AI Video Interview Act requires candidate consent for AI video analysis; the Colorado AI Act takes effect June 2026; California regulations effective October 2025 require four-year retention of AI decision records.

Building a Compliance Checklist

- Inventory every AI tool in your hiring workflow and determine whether it qualifies as an AEDT under applicable law.

- Engage an independent third-party auditor for annual bias audits; do not rely on vendor-provided reports.

- Implement candidate disclosure notices covering what tool is used, what data it collects, and how it affects evaluation.

- For video or behavioral tools, obtain explicit opt-in consent and document retention and deletion policies.

- Ensure all AI tools produce explainable outputs - if you cannot justify a ranking to a regulator, the tool is a liability.

- Establish a quarterly internal review cadence; annual audits are the legal minimum, not the operational standard.

- Brief your legal team on state-specific obligations if you hire in NY, IL, CO, or CA.

(Visual callout: Downloadable compliance checklist graphic.)

How to Implement AI in Your Hiring Process - A Step-by-Step Framework

Most content on how to use AI in hiring stops at benefits and risks. This section is the roadmap.

Step 1 - Audit Your Current Hiring Workflow

Map your current process stage by stage and identify where candidates drop off, where recruiter time disappears, and where decision quality varies most. AI applied to the wrong bottleneck produces efficiency in the wrong place.
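A workflow audit usually starts with stage-to-stage conversion. The sketch below is one minimal way to compute it; the stage names and candidate counts are hypothetical, and `stage_conversion` is an illustrative helper, not part of any ATS.

```python
def stage_conversion(funnel: list[tuple[str, int]]) -> list[tuple[str, str, float]]:
    """Return (from_stage, to_stage, conversion_rate) for each adjacent stage pair."""
    rates = []
    for (stage, count), (next_stage, next_count) in zip(funnel, funnel[1:]):
        rate = next_count / count if count else 0.0
        rates.append((stage, next_stage, rate))
    return rates


# Hypothetical counts for one role family over one quarter.
funnel = [
    ("applied", 1000),
    ("screened", 400),
    ("assessed", 120),
    ("interviewed", 60),
    ("offered", 12),
]

conversions = stage_conversion(funnel)
# The transition with the lowest conversion is a candidate bottleneck to investigate.
weakest = min(conversions, key=lambda row: row[2])
```

A low conversion rate is not automatically a problem, since late stages are meant to be selective. It shows where to look first; recruiter hours per stage and decision consistency still need to be reviewed alongside it.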

Step 2 - Define Clear Objectives and KPIs

Name the specific outcome you are improving before selecting a tool - reduce time-to-shortlist by 30%, increase diversity of technical shortlists by 20%, decrease unqualified first-round interviews by 40%. Without a defined KPI, you cannot tell whether the AI is working or quietly causing harm.

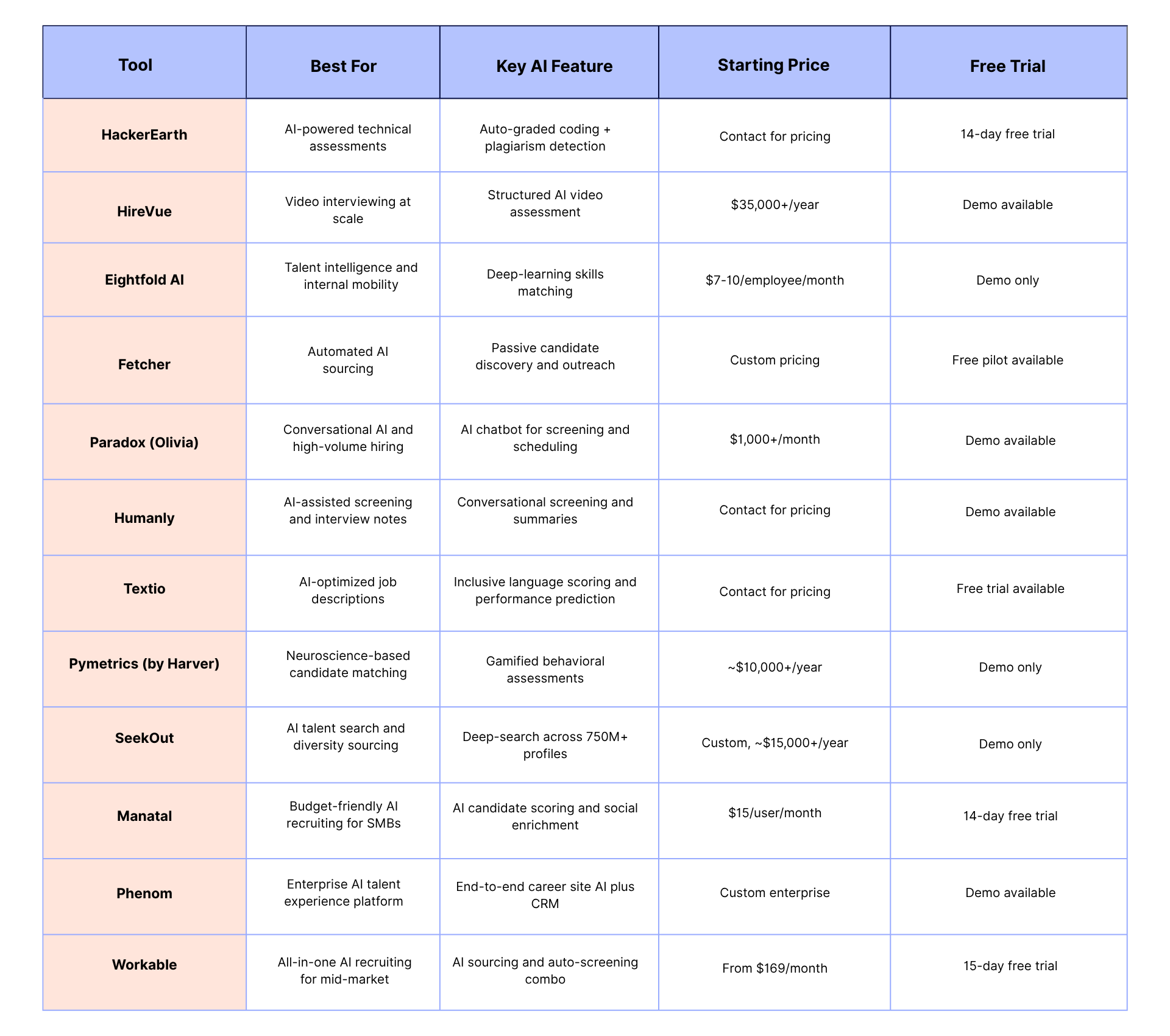

Step 3 - Select the Right AI Tools for Each Stage

Match tool category to the bottleneck: NLP writing tools for job descriptions, AI talent search for passive sourcing, ML-based ATS with explainable scoring for resume screening, HackerEarth for technical evaluation, conversational AI for scheduling. The platforms best at one stage are rarely best at all of them.

Step 4 - Run a Controlled Pilot

Start with one role family or one hiring stage, tracking KPIs against a control group. A pilot of 30 to 50 candidates produces enough data to evaluate signal quality and test candidate notification workflows before they apply at full volume.
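Comparing the pilot against the control group can be as simple as the relative change in the KPI. The sketch below assumes time-to-shortlist measured in days and a 30% reduction target; the sample values and the helper `relative_reduction` are hypothetical.

```python
from statistics import mean


def relative_reduction(control: list[float], pilot: list[float]) -> float:
    """Return the fractional reduction of the pilot mean versus the control mean."""
    baseline = mean(control)
    if baseline == 0:
        raise ValueError("control mean is zero; relative reduction is undefined")
    return (baseline - mean(pilot)) / baseline


# Hypothetical days-to-shortlist per requisition.
control_days = [10, 12, 8, 10]
pilot_days = [6, 7, 8, 7]

reduction = relative_reduction(control_days, pilot_days)
meets_target = reduction >= 0.30  # target of a 30% faster time-to-shortlist
```

With samples this small, a difference in means can be noise. Treat the result as a signal to keep investigating, and check it against the other KPIs before scaling the tool.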

Step 5 - Train Your Hiring Team

Without training, hiring managers rubber-stamp AI recommendations - which is exactly how bias amplification becomes a legal problem. Recruiters need to know how to read AI outputs, flag anomalies, and document the cases where they override the tool.
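One way to make override documentation auditable is to log each review as a structured record and track how often reviewers disagree with the tool. The sketch below is an assumption about how such a log might look; the field names, the `ReviewRecord` class, and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ReviewRecord:
    candidate_id: str
    ai_recommendation: str   # e.g. "advance" or "reject"
    human_decision: str      # the reviewer's final call
    override_reason: str = ""


def override_rate(records: list[ReviewRecord]) -> float:
    """Return the share of reviews where the human decision differs from the AI's."""
    if not records:
        return 0.0
    overrides = sum(1 for r in records if r.human_decision != r.ai_recommendation)
    return overrides / len(records)


def undocumented_overrides(records: list[ReviewRecord]) -> list[str]:
    """Return candidate IDs where a reviewer overrode the AI without giving a reason."""
    return [
        r.candidate_id
        for r in records
        if r.human_decision != r.ai_recommendation and not r.override_reason.strip()
    ]


# Hypothetical review log.
log = [
    ReviewRecord("c-001", "reject", "advance", "Relevant open-source work not on resume"),
    ReviewRecord("c-002", "advance", "advance"),
    ReviewRecord("c-003", "reject", "advance"),
    ReviewRecord("c-004", "reject", "reject"),
]

rate = override_rate(log)
missing_reasons = undocumented_overrides(log)
```

An override rate near zero over a large sample can indicate rubber-stamping, while a list of undocumented overrides shows where the paper trail is incomplete.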

Step 6 - Monitor, Audit, and Iterate

Set a quarterly review cadence to examine pass rates by demographic group and candidate experience scores. HackerEarth's built-in analytics surface assessment performance by candidate cohort, giving HR generalists visibility into whether the evaluation process is producing equitable outcomes before the annual audit requires them to prove it.
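A quarterly review of pass rates by group can be sketched as an impact-ratio calculation: each group's selection rate divided by the highest group's rate. The group labels and counts below are hypothetical, and the 0.8 flag threshold is the common four-fifths rule of thumb, which is an assumption here and not a figure from this article or a legal pass/fail line.

```python
def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to its selection rate divided by the highest group's rate.

    `outcomes` maps a group label to (selected, total_assessed).
    """
    rates = {g: sel / total for g, (sel, total) in outcomes.items() if total > 0}
    top = max(rates.values(), default=0.0)
    if top == 0:
        return {g: 0.0 for g in rates}
    return {g: rate / top for g, rate in rates.items()}


# Hypothetical quarterly counts: (candidates advanced, candidates assessed).
outcomes = {"group_a": (40, 100), "group_b": (24, 80)}

ratios = impact_ratios(outcomes)
# Assumed review threshold (four-fifths rule of thumb), not a compliance verdict.
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]
```

A flagged group is a prompt for investigation by the audit and legal teams, not proof of discrimination; small group sizes in particular can swing the ratio sharply.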

The Future of AI in Hiring: Trends to Watch

Understanding the future of AI in hiring matters now because the tools and regulations shaping the next two years are already in early deployment.

Generative AI for Hyper-Personalized Candidate Journeys

Generative AI is moving from drafting job descriptions to contextual personalization across the full candidate journey - career site content, chatbot responses, and offer communications that adapt to individual profiles. This will become standard practice for competitive employers within 12 to 18 months.

Agentic AI and Autonomous Recruiting Workflows

Agentic AI systems that orchestrate multi-step hiring tasks end-to-end are moving from experimental to early adoption. LinkedIn's first true AI recruiter agent, launched in 2024, drafts job descriptions, sources candidates, and initiates outreach as a sequential workflow - what used to take a sourcer a full day now runs in the background.

Skills Ontologies and Dynamic Job Matching

AI is increasingly able to map transferable skills across roles, identifying that a candidate's experience in one domain covers requirements in another they would never have thought to apply for. This directly supports the skills-first movement by reducing dependence on job title matching and credential proxies.

Regulatory Evolution and Responsible AI as a Competitive Advantage

The EU, California, Colorado, and Illinois have all established enforceable AI hiring obligations in the last 18 months. Companies that invest in transparent, auditable AI practices now will face lower legal exposure and stronger candidate trust than those treating compliance as a future problem.

Frequently Asked Questions

How is AI used in the hiring process?

AI in hiring spans five stages: job description optimization, candidate sourcing, resume screening, skills-based assessments, and interview scheduling - with 64% of organizations that use HR AI applying it specifically to recruiting (SHRM, 2025). Skills assessments carry the strongest signal quality and lowest bias risk; fully automated resume rejection carries the highest.

How does AI reduce bias in the hiring process?

Properly designed AI reduces bias by applying consistent evaluation criteria to every candidate and enabling blind assessment formats that remove identity signals - HackerEarth's coding assessments evaluate code quality alone. The caveat that never appears in vendor marketing: AI trained on historically biased data replicates those biases at scale, so bias reduction requires ongoing audit, not just initial design.

What are the legal risks of using AI in hiring?

NYC Local Law 144 requires annual independent bias audits and candidate notification with penalties reaching $1,500 per day; the EU AI Act classifies hiring AI as high-risk effective August 2026; California, Colorado, and Illinois each have separate, enforceable requirements. The legal landscape is expanding state by state faster than most HR teams are tracking it.

How are companies using AI in the hiring process in 2025?

43% of organizations used AI for HR tasks in 2025 (SHRM), up from 26% the prior year. Unilever used AI video analysis and gamified assessments to screen 250,000 applicants per year, cutting time-to-hire by 75%; HackerEarth customers run AI-proctored assessments and hackathons that cut cost-per-hire for technical roles by more than 75%. The consistent pattern in successful deployments is AI for volume and initial filtering, humans for relationships and final decisions.

Will AI replace human recruiters?

No - 74% of candidates still prefer human interaction for final hiring decisions even as they accept AI assistance in earlier stages (Insight Global, 2025). The stages where AI adds the most value are exactly the stages where recruiters least want to spend time; the stages where human judgment is irreplaceable - offer negotiation, cultural fit, hiring manager alignment - are where recruiters add the most value.

Conclusion

The efficiency case for AI in hiring is real: faster screening, lower cost-per-hire, and better quality signals for technical roles. So is the risk: bias amplified at algorithmic speed, legal exposure growing as regulation matures, and the genuine harm of automated rejection for candidates who deserved a human look.

The companies that get this right treat AI as the narrowing layer and humans as the deciding layer - and invest specifically in tools, like HackerEarth's skills-based assessments, where the AI evaluates demonstrated ability rather than predicting it from proxies that have always been unreliable.

Ready to remove guesswork from technical hiring? Start your free trial of HackerEarth's assessment platform and experience AI-driven candidate evaluation firsthand.
