Technical Skills Test for Hiring: How to Evaluate Developers Accurately

A technical skills test for hiring is the most direct way to separate developers who can do the job from those who interviewed well for it. Right now that distinction matters more than ever. The U.S. Bureau of Labor Statistics projects software developer employment will grow 15% from 2024 to 2034, while 76% of companies already report facing a direct tech talent shortage. AI/ML roles average 89 days to fill. Technical positions in general take about 66 days, roughly 50% longer than non-technical roles.

The pressure to make accurate assessments fast is measurable and real. A technical assessment for hiring replaces resume-and-gut-feel screening with objective, role-relevant evidence that hiring teams at every technical depth can act on confidently.

What Is a Technical Skills Test for Hiring?

Definition and Purpose

Think of a technical skills test the way you would a work sample rather than an audition. It is a structured evaluation designed to measure whether a candidate can actually perform the technical work a role requires, under conditions that resemble real job tasks. McKinsey research confirms that hiring for skills is five times more predictive of job performance than hiring based on education and more than twice as effective as hiring based on work experience alone. A well-designed developer skills assessment converts that predictive advantage into a shortlist hiring managers can trust.

Why Traditional Screening Falls Short

Resume screening feels like a quality gate but functions more like a noise filter, and the problem is getting worse. With AI-generated resumes now flooding pipelines, surface polish has decoupled from underlying capability. Nearly 60% of bad hires occur because the employee could not produce the level of work the employer required. An IT skills assessment or programming test for hiring, positioned at the top of the funnel, is the most direct way to close that gap before it costs anything.

Types of Technical Assessments for Hiring

The format you choose determines what you actually learn about a candidate, and picking the wrong one at the wrong stage wastes everyone's time.

Coding Challenges (Algorithmic and Data Structures)

Algorithmic tests are the workhorse of early-stage technical screening because they scale to hundreds of candidates simultaneously with automated grading. The criticism is fair though: pure algorithmic challenges measure a narrower skill set than most real roles require, so use them as a first filter, not a final verdict.

Project-Based / Take-Home Assignments

Take-home projects surface the qualities that truly separate strong engineers from average ones: code organization, documentation habits, and edge case handling. Keep them under four hours, because anything longer starts selecting for availability rather than ability.

Multiple-Choice and Conceptual Knowledge Tests

For IT skills assessment in cloud, networking, or database roles, multiple-choice tests efficiently verify domain knowledge before investing in a live conversation. They should never be the primary evaluation tool for software engineering roles.

Pair Programming and Live Coding Sessions

A live coding session tells you more in 60 minutes than a stack of submitted exercises will, because you watch a candidate's thinking process in real time, not just the output. The cost is interviewer time, which is why this belongs at the final stage, not the first.

Full-Stack or Role-Specific Simulations

Role-specific simulations, such as debugging an actual API or extending a real component, are the gold standard for senior positions where a mis-hire is expensive. HackerEarth's real-world project simulations test code quality, logic, and technical depth against actual role demands rather than generic computer science theory.

How to Build an Effective Technical Screening Test - Step by Step

Step 1 - Define the Role's Core Technical Competencies

Before picking a format, list the five to eight technical competencies the role genuinely requires in the first ninety days, not the full laundry list from the job description. Everything downstream, including format, difficulty, and rubric, flows from this list.

Step 2 - Choose the Right Test Format (or Combine Formats)

Multi-measure testing consistently outperforms single-format assessments, because no one format catches everything. HackerEarth supports combining coding challenges, MCQs, and project-based tasks in a single candidate workflow, which means you can layer signal at each funnel stage without asking candidates to use three separate platforms.

Step 3 - Set Difficulty Level and Time Limits

A tech hiring assessment that is too easy produces a flat score distribution where everyone looks similar. Calibrate time limits to how long a proficient developer takes to complete the task comfortably, not to how quickly an expert can finish it, because expert-speed limits create pressure that penalizes methodical thinkers in favor of fast ones.

Step 4 - Use Anti-Cheating and Proctoring Measures

Assessment fraud more than doubled in 2025 and is no longer a hypothetical concern. According to CodeSignal's 2026 research, cheating and fraud attempt rates for proctored assessments rose from 16% in 2024 to 35% in 2025, driven by unauthorized AI use, proxy test-taking, and plagiarism. HackerEarth's AI proctoring uses face detection, live monitoring, plagiarism checks, and keystroke pattern analysis to maintain integrity at scale, while also creating a behavioral record of how each candidate engaged with the problem, which itself becomes an evaluation signal.

Step 5 - Establish Scoring Rubrics and Benchmarks Before Reviewing

Rubrics finalized before any submissions are reviewed remove the bias that creeps in when scoring criteria shift based on what the first few candidates produced. A useful rubric for a programming test for hiring covers four dimensions: functional correctness, efficiency, code quality and readability, and edge case handling. HackerEarth's automated scoring covers all four with per-submission reports that include percentile benchmarks against the broader candidate population.
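A rubric fixed in advance can be written down as a small scoring function, which makes it harder for the criteria to drift once submissions arrive. The sketch below is illustrative only: the four dimension names follow the list above, but the weights and the 0-100 scale are assumptions, not HackerEarth's scoring method.

```python
# Hypothetical weights: the four dimensions come from the rubric described
# above, but how much each one counts is an assumption made for illustration.
WEIGHTS = {
    "correctness": 0.4,
    "efficiency": 0.2,
    "quality": 0.2,
    "edge_cases": 0.2,
}


def rubric_score(scores: dict) -> float:
    """Combine per-dimension scores (each on a 0-100 scale) into one weighted score."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


if __name__ == "__main__":
    submission = {"correctness": 100, "efficiency": 50, "quality": 50, "edge_cases": 0}
    print(rubric_score(submission))
```

Agreeing on the weights before anyone reads a submission is the part that matters; the arithmetic itself is trivial.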

Step 6 - Pilot the Test Internally

Have two or three engineers on the relevant team complete the technical evaluation test under real conditions before it goes live. This catches time limit problems and ambiguous instructions before they affect actual candidates, and it creates reference submissions hiring managers can use when interpreting later scores.
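Pilot completion times can also feed the time limit directly. The following is a minimal sketch under stated assumptions: the 1.5x buffer and the rounding to five-minute steps are hypothetical choices, not figures from any platform or study.

```python
import math
from statistics import median


def suggested_time_limit(pilot_minutes, buffer=1.5, round_to=5):
    """Suggest a time limit (in minutes) from internal pilot completion times.

    Takes the median pilot time, applies a comfort buffer, and rounds up to
    the nearest `round_to` minutes. Both `buffer` and `round_to` are
    illustrative assumptions to be tuned by the hiring team.
    """
    if not pilot_minutes:
        raise ValueError("at least one pilot time is required")
    raw = median(pilot_minutes) * buffer
    return math.ceil(raw / round_to) * round_to


if __name__ == "__main__":
    # Three engineers finished the pilot in 40, 50, and 45 minutes.
    print(suggested_time_limit([40, 50, 45]))
```

Using the median rather than the fastest time keeps the limit anchored to a proficient pace instead of an expert one.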

What to Measure in a Developer Skills Assessment

Code Correctness and Efficiency

Correctness is the baseline, but efficiency is where the differentiation lives. A solution that works in O(n²) time when O(n log n) is available tells you something meaningful about how a developer thinks at scale.
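To make the complexity gap concrete, here is an illustrative pair of solutions to one small task, detecting whether a list contains a duplicate. The task and function names are chosen for this example only. Both versions are correct; the first compares every pair, while the second sorts once and scans neighbors.

```python
def has_duplicate_quadratic(values):
    """Compare every pair of elements: O(n^2) comparisons in the worst case."""
    n = len(values)
    for i in range(n):
        for j in range(i + 1, n):
            if values[i] == values[j]:
                return True
    return False


def has_duplicate_sorted(values):
    """Sort a copy (O(n log n)), then check adjacent elements in one pass."""
    ordered = sorted(values)
    return any(a == b for a, b in zip(ordered, ordered[1:]))


if __name__ == "__main__":
    sample = [3, 1, 4, 1, 5]
    print(has_duplicate_quadratic(sample), has_duplicate_sorted(sample))
```

On a handful of elements the two are indistinguishable, which is why test cases with large inputs are what actually separate the submissions.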

Code Quality and Readability

Code that works but that no teammate can read or extend without spending an afternoon deciphering it is not production-ready. Quality signals, including naming conventions, function decomposition, and absence of anti-patterns, matter especially for roles involving existing codebases.

Problem-Solving Approach

In live coding formats, the approach often tells you more than the solution. A candidate who clarifies requirements before writing, tests incrementally, and communicates their reasoning clearly is showing you how they will actually behave on the job.

Domain-Specific Knowledge

A software engineering test that ignores the tech stack the role uses is measuring general aptitude rather than job readiness. An IT skills assessment for a cloud infrastructure role should include provider-specific knowledge, not just generic systems concepts.

Speed vs. Depth Trade-Off

Speed is a weak proxy for competence in software development. The best technical interview tests give proficient developers enough time to complete the work carefully, then differentiate on quality and sophistication rather than who finished fastest.

How Non-Technical Recruiters Can Confidently Use Technical Assessments

Non-technical HR generalists should not have to interpret code to run an effective screening process, and with the right platform they do not have to.

Leveraging Auto-Scored Reports and Percentile Benchmarks

A platform worth using hands you a structured report with scores across each competency, a percentile rank against comparable candidates, and a pass or fail recommendation against the threshold your team set in advance. HackerEarth's candidate reports are built specifically for non-technical reviewers, which means a recruiter can make confident shortlist decisions without a senior engineer looking over their shoulder at every submission.
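For readers curious what sits behind such a report, the sketch below shows one common way to compute a percentile rank and a pass or fail recommendation. It is an assumption-laden illustration: the "at or below" percentile definition, the field names, and the sample threshold are all hypothetical and do not describe HackerEarth's actual report format.

```python
def percentile_rank(score, population):
    """Percentage of population scores that are at or below `score`."""
    if not population:
        raise ValueError("population must not be empty")
    at_or_below = sum(1 for s in population if s <= score)
    return 100.0 * at_or_below / len(population)


def summarize(score, population, threshold):
    """Build a small report a non-technical reviewer could act on."""
    return {
        "score": score,
        "percentile": percentile_rank(score, population),
        "recommendation": "pass" if score >= threshold else "fail",
    }


if __name__ == "__main__":
    comparable_scores = [50, 60, 70, 80]
    print(summarize(70, comparable_scores, threshold=65))
```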

Collaborating with Hiring Managers on Interpretation

A clean working protocol eliminates most of the friction: recruiters advance candidates who meet or exceed the threshold automatically, flag the narrow band just below it for engineering manager review, and reject clearly below-floor candidates without escalating. This removes the calibration meetings that slow offers down.
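The protocol above amounts to a three-way decision rule, sketched here under stated assumptions: the outcome labels and the default five-point review band are hypothetical, and each team would set its own threshold and band width.

```python
def triage(score, threshold, review_band=5.0):
    """Route a candidate based on their score relative to a preset threshold.

    - at or above the threshold: advance automatically
    - within `review_band` points below it: flag for engineering manager review
    - anything lower: reject without escalating
    """
    if review_band < 0:
        raise ValueError("review_band must be non-negative")
    if score >= threshold:
        return "advance"
    if score >= threshold - review_band:
        return "manager_review"
    return "reject"


if __name__ == "__main__":
    for candidate_score in (82, 67, 40):
        print(candidate_score, triage(candidate_score, threshold=70))
```

Keeping the band narrow is what preserves the time savings: only borderline cases reach an engineering manager.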

Avoiding Common Misinterpretations

The two errors that come up most often are treating a strong score on a general coding challenge as sufficient evidence for a specialized role, and treating a low score as disqualifying when the test itself was poorly designed. Both are fixed at the design stage, not during review.

Technical Skills Test Best Practices for 2025

Prioritize Candidate Experience

A strong developer who is currently employed and fielding three other offers will not complete a two-hour assessment with unclear instructions. If your test would fail that basic gut check, it needs to be shorter, clearer, or more obviously connected to the actual job.

Ensure Fairness and Reduce Bias

Research by SHL in 2025 found that ML-based grading for technical tests increased the number of women who cleared coding simulations by 27.75% compared to traditional cut-off methods. Objective scoring, when properly designed, produces fairer outcomes as a side effect of removing evaluator subjectivity.

Keep Tests Job-Relevant

A technical screening test that measures skills the role does not require produces misleading data and wastes candidate goodwill. Relevance is what gives a score meaning, and removing off-topic questions is the single most reliable improvement most teams can make.

Iterate Based on Data

Every assessment deployment generates completion rates, score distributions, and eventually post-hire performance correlations. Teams that review this data quarterly and adjust their tests accordingly consistently produce better hiring outcomes than teams that treat assessment design as a one-time decision.
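A quarterly review of this kind needs only a few numbers. The sketch below, using made-up sample data, computes a completion rate, the spread of scores (a very small spread suggests the test is not differentiating candidates), and a Pearson correlation between test scores and later performance ratings. The function names and the choice of Pearson correlation are assumptions made for illustration.

```python
from statistics import mean, pstdev


def completion_rate(invited, completed):
    """Fraction of invited candidates who finished the assessment."""
    if invited <= 0:
        raise ValueError("invited must be positive")
    return completed / invited


def score_spread(scores):
    """Population standard deviation of scores; near zero means a flat distribution."""
    return pstdev(scores)


def pearson(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for constant data")
    return cov / (sx * sy)


if __name__ == "__main__":
    # Made-up numbers purely for demonstration.
    test_scores = [55, 62, 70, 78, 90]
    performance_ratings = [2.8, 3.1, 3.4, 3.9, 4.5]
    print(completion_rate(invited=200, completed=150))
    print(score_spread(test_scores))
    print(pearson(test_scores, performance_ratings))
```

With only a handful of hires the correlation will be noisy, so it is better read as a trend to watch across quarters than as a verdict on the test.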

Combine Assessments with Structured Interviews

A technical skills test measures output. A structured interview measures thinking, communication, and judgment in a collaborative context. The most predictive hiring processes use assessment results to inform interview questions rather than treating them as separate events.

Comparing Top Technical Assessment Platforms

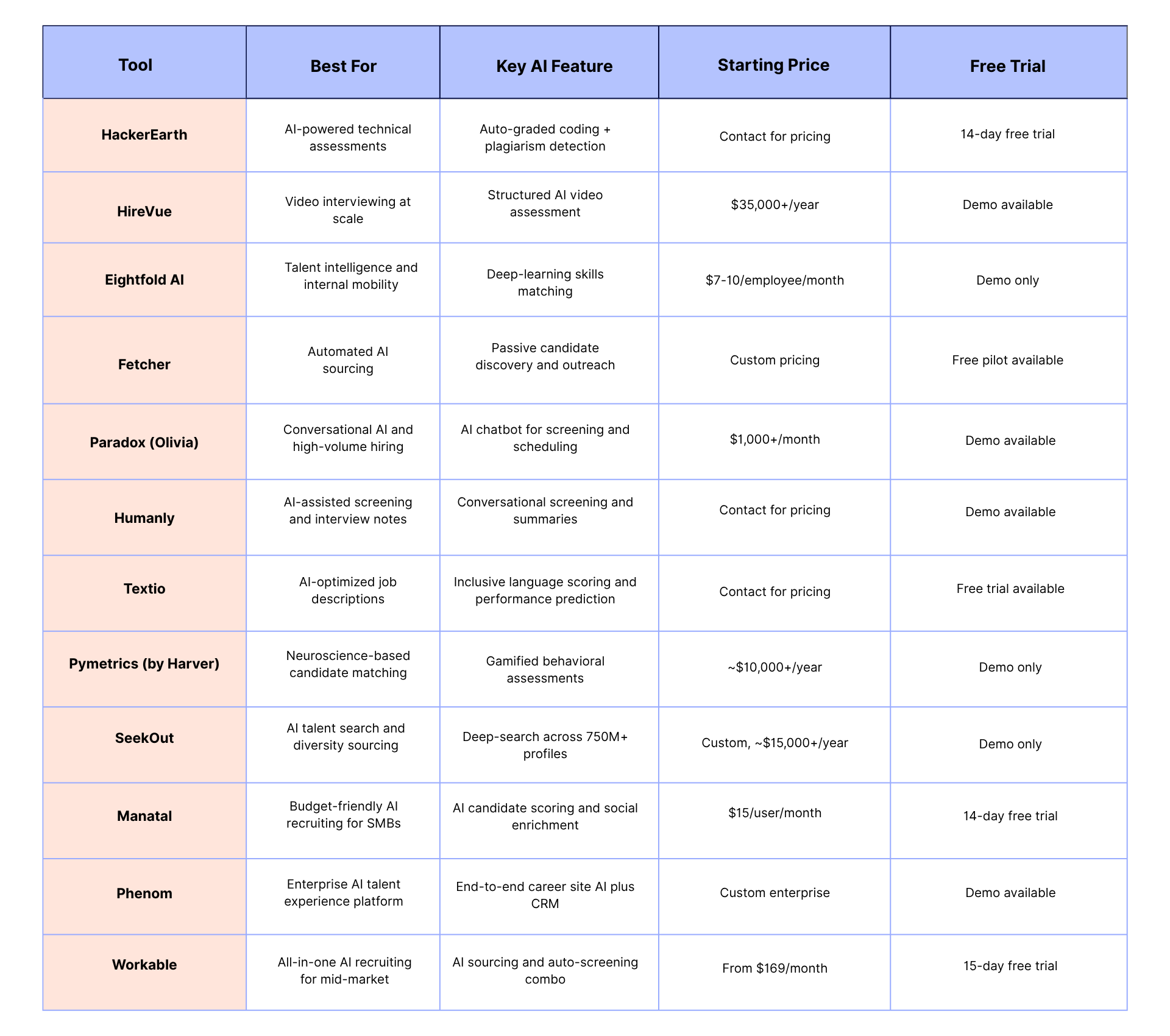

Every platform in this space has genuine strengths, and the right choice depends on your hiring volume, role mix, and how much your non-technical recruiters need to operate independently.

HackerEarth's practical advantage is that it covers the full workflow in one place. Where HackerRank is strong on algorithms and enterprise scale, HackerEarth adds live coding interviews through FaceCode, hackathon-based sourcing, and analytics without requiring a separate tool for each. For teams that want to stop stitching together point solutions, that consolidation is worth more than any individual feature comparison.

Conclusion

The technical skills test for hiring is not an optional layer on top of interviews. It is the mechanism that determines whether hiring decisions are based on evidence or on impressions. Resumes tell you what someone claims. Assessments tell you what they can do.

HackerEarth is built for the full scope of that problem: assessment library, live interviewing, AI proctoring, hackathon-based sourcing, and ATS integrations in one platform that non-technical HR generalists can operate without constant engineering manager support.

The most useful next step is running a technical assessment on your next open developer role and comparing the shortlist it produces to what resume screening alone would have given you.

See HackerEarth Assessments in action for your specific technical roles. Request a free demo and walk through the full candidate evaluation workflow with the HackerEarth team.

Try HackerEarth's assessment library for free with a 14-day trial, no credit card required. Access 17,000+ questions across 900+ skills.

Talk to the HackerEarth team about building a custom assessment for your next developer hire. Get role-specific test recommendations within 48 hours.
